Artificial Intelligence: All Systems are Go

By Nicholas Curcio, 04/03/16

“Go” is an ancient Chinese board game widely regarded as one of the most complex and cerebral games in existence. It is played on a 19×19 board, which admits roughly 2.082 × 10^170 (i.e., about a 2 followed by 170 zeroes) unique legal positions; by comparison, there are only about 10^80 atoms in the observable universe. Go has its roots in Zhou dynasty China and, along with calligraphy, painting, and playing a musical instrument, was considered one of the four cultivated arts of Chinese culture. This week, perennial Go champion Lee Sedol was beaten 4-1 in a best-of-five series by AlphaGo, a Google DeepMind computer program, marking a significant victory in artificial intelligence development. Humanity is opening Pandora’s box with artificial intelligence; we must monitor its progress without overregulating it, protecting ourselves from unintended consequences while not forfeiting its benefits.
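
To put those magnitudes side by side, here is a rough back-of-the-envelope sketch in Python. The figures for legal positions and atoms are simply the approximations quoted above; the upper bound of 3^361 assumes each of the 361 points is independently empty, black, or white, which overcounts because many such arrangements are illegal.

```python
# Back-of-the-envelope comparison; all inputs are approximations.
BOARD_POINTS = 19 * 19                 # 361 intersections on a standard board
upper_bound = 3 ** BOARD_POINTS        # empty / black / white per point (overcounts)

legal_positions = 2.082e170            # approximate figure quoted in the article
atoms_in_universe = 1e80               # rough estimate quoted in the article

# Share of raw arrangements that are legal, and positions per atom.
legal_fraction = legal_positions / upper_bound
positions_per_atom = legal_positions / atoms_in_universe

print(f"raw arrangements have {len(str(upper_bound))} digits")
print(f"legal fraction is about {legal_fraction:.1%}")
print(f"legal positions per atom: about {positions_per_atom:.1e}")
```

Under these assumptions, only about one raw arrangement in a hundred is a legal position, and the legal positions still outnumber atoms by some ninety orders of magnitude.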

What seems on the surface like a trivial technology fun fact may have disturbingly deeper implications. Computers are becoming remarkably capable. Watch an IBM Watson commercial in which Watson remarks that it can read 800,000,000 pages per second, absorbing centuries of human interactions and tendencies in the time it took you to open a new browser tab, and try not to feel a bit unnerved. The fact is, advanced computers can perform many functions better than a human can, from automating low-skill jobs to complex data analysis. This has many people nervous about technology hurting the labor force, a fear manifested long ago in the Luddite riots of 19th-century England, in which textile workers destroyed factory machinery to gain bargaining leverage.

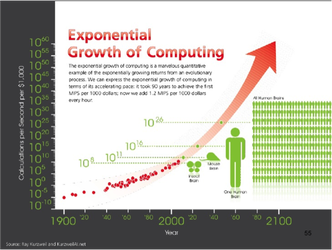

Gordon E. Moore, co-founder of Intel, observed that the number of transistors in a dense integrated circuit doubles roughly every two years, a trend often quoted as every 18-24 months. In plain terms, this is commonly taken to imply that computing capability doubles over the same timespan. The exponential growth of technology in the 21st century may one day lead to robotic “self-awareness”, at which point robots could make independent decisions and pursue objectives they create. Self-awareness would lead to recursive self-improvement; similar to you getting around to reading that self-help book or finally starting yoga, computers would be able to autonomously improve themselves and become faster and more powerful. This confluence of thought and self-awareness is called “The Singularity”. The Singularity may not be far away; at the 2012 Singularity Summit, Oxford mathematician and philosopher Stuart Armstrong presented a study of artificial intelligence predictions by field experts and derived a median year of 2040. The end result would be an autonomous “race” of technology with capabilities “unfathomable to human intelligence”. However, it is important to note that many computer scientists doubt that technology will continue to progress at the rate Moore proposed, which would mean the Singularity is farther off than Armstrong's study suggested.
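
To see how quickly the doubling rule described above compounds, consider a minimal Python sketch. The function name `growth_factor` and the ten-year horizon are illustrative assumptions, not anything Moore specified; the only inputs taken from the text are the 18- and 24-month doubling periods.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Multiplicative growth after `years` if a quantity doubles every `doubling_months`."""
    doublings = years * 12 / doubling_months
    return 2 ** doublings

# Compare the two ends of the 18-24 month range over a hypothetical decade.
for months in (18, 24):
    factor = growth_factor(10, months)
    print(f"doubling every {months} months: about {factor:.0f}x in 10 years")
```

The gap between the two ends of the range is large: over a decade, a 24-month doubling period gives a 32-fold increase, while an 18-month period gives roughly a 100-fold increase. That sensitivity is one reason predictions built on the trend vary so widely.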

So, we as policy analysts must ask ourselves: how do we protect against the dystopian downsides of AI while enabling people and firms to use technology to their maximum benefit? The answer depends on one's ethical system of beliefs and one’s inherent optimism or pessimism. To some, artificial intelligence evokes negative imagery of computers revolting against humans: HAL 9000 from 2001: A Space Odyssey, the Cylons from Battlestar Galactica, Skynet from The Terminator. To be fair, AI takeover could be a legitimate concern, one that will call for a new paradigm on computers as humans rethink our relationship with our newly sentient counterparts. Technological pessimists will favor harshly regulating or banning artificial intelligence developments, but this is the wrong approach. I subscribe to the idea that humans and technology must grow together, each using the other to reach its full potential. By allowing technology to grow unhindered, humanity can continue to reap the ever-increasing benefits it yields, bringing millions out of poverty and improving the global standard of living at a pace unseen in human history. But in the new era of AI, governments must closely monitor how independently machines think and act. A human/AI power struggle is something no one wants.

The relationship between humans and technology will continue to grow more complex in the 21st century, guiding humanity to new heights and opening new doors. Computers can unleash human potential on an amazing scale, allowing us to be more efficient and intelligent about decisions in business, government, and even medicine. But we must first solve the ethical dilemma of how to control artificial intelligence as it inevitably outpaces human knowledge. Humanity must take care not to become its own Frankenstein, creating a monster it may one day be unable to control.
